Published on April 28, 2026 | April 19, 2026

Building an LLM Wiki for Ditto: How a Mac Mini Became My Second Brain

How I built a Karpathy-style LLM wiki for Ditto on a Mac mini with Claude Code skills and local hybrid search. Template on GitHub.

An LLM wiki is a persistent, LLM-maintained knowledge base built in markdown that replaces traditional RAG pipelines with pre-assembled context. I built one for Ditto and it's become the system I use to organize everything I know about the business, research topics before writing, and keep our messaging consistent. This post covers how I set it up and why you might want to build one for your company.

I'd been watching the open-source AI agent space for months. Projects like OpenClaw were interesting: 200+ LLM backends, thousands of community skills, real flexibility. But my wikis hold customer details, board prep, competitive positioning. Running a community skill registry against that data is a supply chain risk I couldn't get comfortable with (more on this in Part 3).

Then two things happened. Anthropic shipped Remote Control and Scheduled Tasks for Claude Code in early 2026, which gave the managed agent the infrastructure I actually needed: mobile access, cron-like jobs, and a trust model I could vet. And Andrej Karpathy published a gist that reframed how I thought about the knowledge problem.

Key Takeaways:

- Karpathy's LLM wiki pattern replaces retrieval with a persistent, LLM-maintained markdown knowledge base that compounds over time

- Separating internal company knowledge from external marketing content requires two wikis with different audiences and different risk profiles

- The core operations are ingest (process sources), query (search and synthesize), and lint (health-check for contradictions and stale data)

- The template is open source at github.com/bigfish24/llm-wiki-template. Start with it, then customize it for your domain

- Local hybrid search (BM25 + vector + LLM reranking) runs fast enough on a Mac mini that cloud RAG is unnecessary at personal or SMB scale

Karpathy's LLM wiki pattern in 200 words

Instead of retrieving raw documents on every query, you have the LLM read sources once, extract the important information, and integrate it into an evolving markdown knowledge graph. The output is a wiki: structured pages with cross-references, entity profiles, topic hubs, and a consistent schema.

Three layers:

- Raw sources -- the immutable collection of articles, PDFs, transcripts, research papers that come in

- The wiki -- LLM-generated markdown files: summaries, entity pages, topic hubs, proof points, all cross-linked

- The schema -- a configuration file (in my case, CLAUDE.md) that defines the wiki's structure, frontmatter conventions, and agent workflows

Three verbs:

- Ingest: read a source, extract entities and facts, create or update wiki pages, log the work

- Query: search the wiki, synthesize an answer with citations, optionally file useful results back as new pages

- Lint: periodic health checks for contradictions, orphan pages, stale claims, missing links
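To make the lint verb concrete, here is a minimal sketch of two of those checks: broken links and orphan pages. It assumes pages cross-reference each other with `[[Page Name]]` wikilinks and that a page named `index` is the entry point. Both are assumptions for illustration, and `lint_wiki` and `load_pages` are hypothetical helpers, not code from the template. Contradictions and stale claims need the LLM itself, so they are out of scope here.

```python
import re
from pathlib import Path

# Matches [[Page]], [[Page|alias]] and [[Page#section]] (an assumed link convention).
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def lint_wiki(pages: dict[str, str]) -> dict[str, list[str]]:
    """Report broken links and orphan pages for a {page_name: markdown} mapping."""
    # Collect the set of link targets found on each page.
    outgoing = {
        name: {target.strip() for target in WIKILINK.findall(text)}
        for name, text in pages.items()
    }
    # A link is broken when its target is not an existing page.
    broken = sorted(
        f"{name} -> {target}"
        for name, targets in outgoing.items()
        for target in targets
        if target not in pages
    )
    # A page is an orphan when no page links to it (the index is exempt).
    linked = set().union(*outgoing.values())
    orphans = sorted(n for n in pages if n not in linked and n != "index")
    return {"broken_links": broken, "orphans": orphans}


def load_pages(wiki_dir: str) -> dict[str, str]:
    """Read every markdown file under wiki_dir, keyed by file name without extension."""
    return {p.stem: p.read_text(encoding="utf-8") for p in Path(wiki_dir).rglob("*.md")}
```

Running `lint_wiki(load_pages("wiki"))` on a schedule would give the agent a short worklist to repair, which is the spirit of the lint pass even if the real skill does more.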

As Karpathy put it: "The wiki is a persistent, compounding artifact." The human curates sources and asks good questions. The LLM does everything else.

This is context engineering, not retrieval. The wiki is the assembled context.

Why this replaces RAG (for CEOs and CTOs)

RAG is not dead. It's evolving into what the industry now calls context engineering. Gartner declared 2026 "the year of context." The New Stack called context the real bottleneck in AI coding: "not compute, not model size, but our ability to capture and convey the implicit knowledge that makes AI systems actually useful."

The modular RAG pipeline, with Self-RAG, Corrective RAG, and agentic orchestration, works well for large-scale enterprise search. If you need to query millions of documents in real time, you need a retrieval pipeline.

But most CEOs and CTOs don't have that problem. We have a few hundred sources, maybe a few thousand, and what we actually need is for the AI to know our business deeply. To remember that we closed a specific deal three months ago. To know our competitor's positioning changed last quarter. To understand that one customer reference is public and another is NDA-only.

RAG can't do that well because every query starts from scratch. Nothing compounds. The AI has no memory of what it learned last time.

Karpathy's LLM wiki is context engineered up front. The wiki's organization does the retrieval work continuously, so when you query it the LLM reads exactly what it needs because pages are pre-structured, pre-linked, and pre-summarized.

If your company has something to say publicly and something to know privately, you probably need a wiki, not a pipeline.

My setup: a Mac mini and two wikis

I run two separate wikis on a Mac mini that sits in my office.

The external wiki is the knowledge base behind our public content: brand voice guidelines, audience profiles, competitor positioning, product messaging, proof points, and research. When I'm writing a blog post or preparing a talk, this is where I check that the messaging is accurate and consistent.

The internal wiki holds company operations, strategy, customer details, board prep, fundraising context, team planning. This one never touches external content. It's a reference that helps me make decisions and prepare for meetings.

Why two and not one? The audiences are different (public-facing communication vs. internal thinking), the confidentiality requirements are different (the internal wiki has customer names tied to deal sizes, runway figures, board material), and the blast radius is different. If I misconfigure a skill on the external wiki and it publishes something wrong, I fix a blog post. If I misconfigure the internal wiki, the stakes are higher. Separation by vault means the external wiki physically cannot reference internal-only data.

The Mac mini runs both continuously. File sync keeps the vaults available on my laptop and phone, but the wiki indexes stay on-device. Part 2 of this series goes into the harness infrastructure: scheduled tasks, remote control, and why a dedicated machine matters.

The template is on GitHub

I've published the template I use: github.com/bigfish24/llm-wiki-template

It includes a CLAUDE.md schema that defines wiki structure, frontmatter conventions, page types, and linking rules. Four base skills: ingest, query, lint, and create. A qmd setup for local hybrid search (more on that below). Standardized YAML frontmatter for products, audiences, competitors, topics, customers, and proof points. And index + log templates that keep the wiki navigable as it grows.
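As an illustration of why standardized frontmatter matters, here is a minimal sketch of a check that a page declares the fields its type requires. The field names in `REQUIRED_FIELDS` are hypothetical and not copied from the template. The parser only handles flat `key: value` lines, which is an assumption made to keep the example dependency-free; a real setup would likely use a YAML library.

```python
# Hypothetical per-type requirements; the template's actual schema may differ.
REQUIRED_FIELDS = {
    "competitor": {"type", "title", "updated", "sources"},
    "customer": {"type", "title", "updated", "visibility"},
}


def parse_frontmatter(markdown: str) -> dict[str, str]:
    """Parse a flat `key: value` block delimited by `---` lines at the top of a page."""
    lines = markdown.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    fields: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return fields
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return {}  # no closing delimiter: treat the page as having no frontmatter


def missing_fields(markdown: str) -> set[str]:
    """Return the required fields a page is missing, based on its declared type."""
    fields = parse_frontmatter(markdown)
    # Pages with an unknown or absent type must at least declare a type.
    required = REQUIRED_FIELDS.get(fields.get("type", ""), {"type"})
    return required - set(fields)
```

A check like this fits naturally inside a lint pass: a customer page without a `visibility` field is exactly the kind of gap you want flagged before anything reaches public content.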

Fork it, drop your first source into raw/, run /ingest, and watch the wiki build itself.

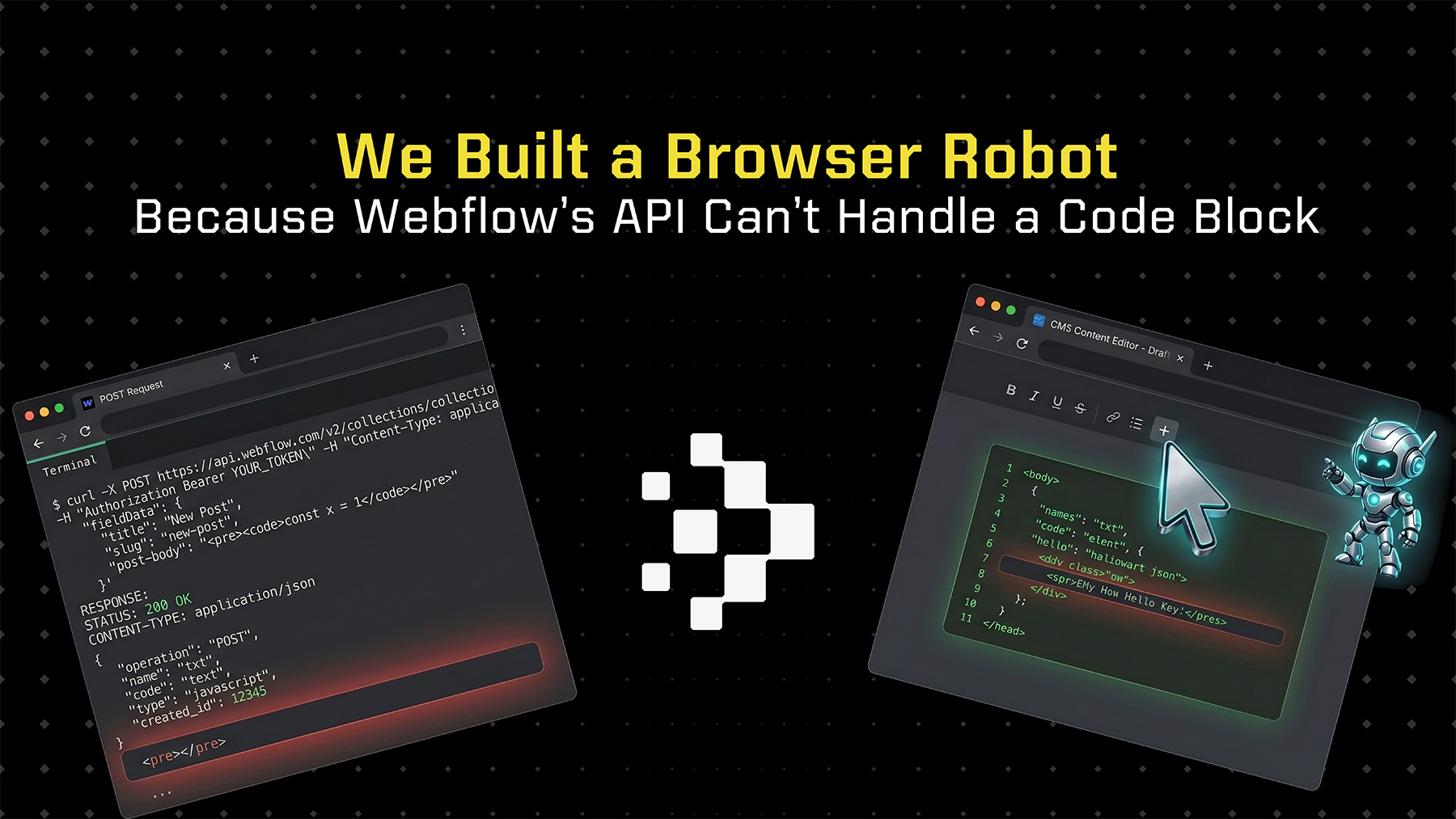

It works for marketing wikis, research libraries, book notes, investor memory, customer intelligence, whatever corpus you need an LLM to maintain. The schema is the important part. The content fills in. You can add domain-specific skills on top of the four base ones (I've added skills for research, content drafting, and publishing to Webflow), but the core ingest-query-lint loop is where most of the value is.

qmd: local hybrid search under the hood

Once you have more than a few dozen wiki pages you need search. Browsing stops working fast.

I use qmd, an open-source tool built by Tobi Lutke that runs entirely on-device. It provides three search modes: BM25 keyword search (fast, reliable, great for exact terms), vector semantic search (finds conceptually similar content even when the keywords don't match), and hybrid search with LLM reranking that combines both and uses a local LLM to rerank results by relevance.
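To show what "combines both" can mean in practice, here is a minimal sketch of reciprocal rank fusion (RRF), a common way to merge a keyword ranking and a vector ranking into one list. This is an assumption for illustration: the post does not say how qmd fuses results, and the final LLM reranking step is left out. The function name, the page ids, and the constant `k = 60` are choices made for the example.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of page ids; earlier positions contribute larger scores."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, page_id in enumerate(ranking, start=1):
            # Each list adds 1 / (k + rank), so pages found by both lists rise.
            scores[page_id] = scores.get(page_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by id so output is deterministic.
    return sorted(scores, key=lambda page_id: (-scores[page_id], page_id))


bm25_hits = ["pricing", "roadmap", "competitors"]        # keyword ranking
vector_hits = ["competitors", "pricing", "positioning"]  # semantic ranking
fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
```

Pages that appear in both rankings end up ahead of pages that appear in only one, and a reranker would then reorder just the top few fused results rather than the whole wiki.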

The reranker is a Qwen3-Reranker-4B model, about 2.5 GB, running locally on the Mac mini. The entire search stack runs on the machine with zero cloud calls.

This matters for the internal wiki especially. When I query "what did we discuss with [customer] about [feature] last quarter," that query and those results never leave the device.

The reranker is already a local LLM running on consumer hardware, and that direction is accelerating. Part 4 of this series covers the local AI horizon in detail, but the search layer is where I first noticed what "local-first AI" actually feels like in practice. Fast, private, under my control.

Where this goes next

So that's the pattern and the setup. But the wiki doesn't run itself. Something needs to trigger the ingests, run the lints, produce the pre-briefs, and make the whole system useful from a phone at the airport.

Part 2 covers the harness: Claude Code's scheduled tasks for daily pre-briefs, Dispatch and Remote Control for mobile access, and why I run this on a Mac mini instead of a laptop.

Part 3 covers the trust question: why I chose Claude Code over OpenClaw for my company wiki, even though OpenClaw has 5,700+ community skills. When your wiki holds board material and customer details, the trust model matters more than the feature count.

Part 4 looks at the local AI horizon: Gemma 4, Qwen 3, OpenOats for local transcription, and why this rhymes with Ditto's own local-first thesis at the sync layer.

The template is on GitHub. Fork it, drop a source into raw/, run /ingest, and see what the wiki builds.